All About k3s - Lightweight Kubernetes

Open Source Enthusiast || DevOps Practitioner || Cloud Computing || Tech Blogger || CNCF Ambassador || AWS Community Builder

Kubernetes is an open source container-orchestration tool that is widely used in the industry for automating application deployment, scaling and management. Creating a Kubernetes (k8s) cluster is quite a tedious task, but not anymore if you use k3s - Lightweight Kubernetes. Within a few commands, your cluster is up and running. Let's dig a little deeper.

K3s & its History

K3s is an open source, lightweight distribution of Kubernetes packaged as a single binary of less than 100 MB. It was initially launched as a component of Rio, an experimental project from Rancher Labs, but due to its efficiency and high demand, it was released as a separate open source project. K3s was officially launched on 26 Feb, 2019. It has seen great success in Edge, IoT, CI, Development, ARM and embedded K8s use cases. It can be installed as a single-node or multi-node cluster and is a fully conformant, production-ready Kubernetes distribution.

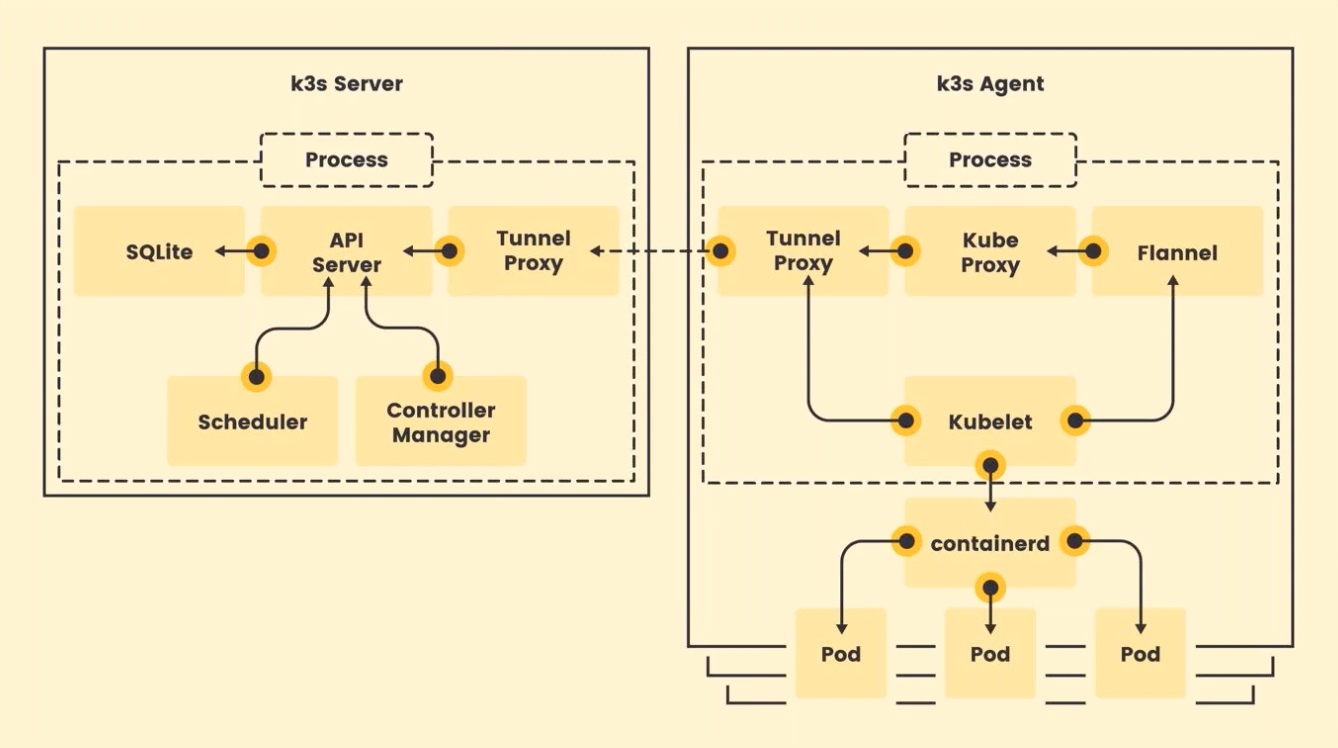

K3s Architecture

The above image shows the basic architecture of k3s. It has two major components, the k3s server and the k3s agent, which correspond to the master node and worker node in k8s. On both server and agent, all the other components run together as a single process, which makes k3s really lightweight; in k8s, each component runs as an individual process. In fact, in a single-node cluster the server and agent can run as a single process on one node, which helps spin up the cluster within 90 seconds.

Most of the components are the same as those used in k8s and work in much the same way, except for SQLite, Tunnel Proxy and Flannel, which are introduced in k3s. For a detailed understanding of the individual components, please have a look at the k8s documentation.

SQLite replaces etcd, which k8s uses. Removing the dependency on etcd allows a single-node cluster to run on SQLite, but only in the single-node case; an HA k3s cluster uses an external database.

Coming to Tunnel Proxy: in k8s, kube-proxy uses a number of ports to connect to the api-server, whereas in k3s it connects to the api-server through the tunnel proxy. Behind the scenes, the tunnel proxy creates a uni-directional connection to reach the api-server; once the connection is established, bi-directional communication is enabled. Using a single port for communication makes the connection more secure.

For cluster networking, the picture shows Flannel, which k3s uses as its Container Network Interface (CNI). This is a general overview of the k3s architecture. For more detailed information, please refer to the documentation.
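To make the external database for HA clusters more concrete, here is a minimal sketch, assuming a MySQL database is used as the datastore. The helper name mysql_endpoint, the credentials, and the host name are all hypothetical placeholders; the --datastore-endpoint flag and the endpoint format follow the k3s documentation, but please check the docs for your k3s version before relying on them.

```shell
#!/bin/sh
# Hypothetical helper (not part of k3s): build a MySQL datastore endpoint
# string in the form mysql://user:password@tcp(host:3306)/database.
mysql_endpoint() {
    # $1=user  $2=password  $3=host  $4=database
    printf 'mysql://%s:%s@tcp(%s:3306)/%s\n' "$1" "$2" "$3" "$4"
}

# Placeholder values for illustration only.
ENDPOINT=$(mysql_endpoint "k3s" "changeme" "db.example.internal" "k3s")
echo "$ENDPOINT"

# On each server node, the endpoint would then be passed to the installer:
#   curl -sfL https://get.k3s.io | sh -s - server --datastore-endpoint="$ENDPOINT"
```

With these placeholder values the script prints mysql://k3s:changeme@tcp(db.example.internal:3306)/k3s. Passwords containing special characters may need extra escaping, which this sketch does not handle.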

Cluster Setup using k3s

Setting up a Kubernetes (k8s) cluster is quite a hectic task, but not in the case of k3s. We can easily set up a k3s single-node or multi-node cluster within a few commands. In this blog, we will cover both methods, starting with the single-node setup.

Single Node Cluster

Creating a single node k3s cluster, i.e. setting up a k3s server, is a piece of cake. We just need to run a single command and it will create the k3s server for you. Please execute the following command -

curl -sfL https://get.k3s.io | sh -s - --write-kubeconfig-mode 644

In the above command, we have used an extra flag, i.e. --write-kubeconfig-mode. By default, the KUBECONFIG file located at /etc/rancher/k3s/k3s.yaml is accessible only to the root user. Passing mode 644 makes it readable by other users as well, so kubectl can be used without sudo. That's all, we have successfully set up our k3s single node cluster.
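To confirm that the flag took effect and the node is up, a quick check along the following lines may help. This is only a sketch: kubeconfig_mode is a hypothetical helper name, and it assumes GNU stat, which is the usual case on Linux hosts where k3s runs. The script does nothing harmful if k3s is not installed.

```shell
#!/bin/sh
# Hypothetical helper: print the octal permission bits of a file
# (relies on GNU stat's -c option).
kubeconfig_mode() {
    stat -c '%a' "$1"
}

KUBECONFIG_PATH="/etc/rancher/k3s/k3s.yaml"

if command -v k3s >/dev/null 2>&1 && [ -f "$KUBECONFIG_PATH" ]; then
    # Should report 644 after installing with --write-kubeconfig-mode 644.
    echo "kubeconfig mode: $(kubeconfig_mode "$KUBECONFIG_PATH")"
    # k3s bundles kubectl, so the node list can be read directly.
    k3s kubectl get nodes
else
    echo "k3s does not appear to be installed on this host"
fi
```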

Multi Node Cluster

Setting up a multi node cluster is also very simple in k3s. We can add as many worker nodes as we want to our master in just a few commands. Please follow the steps below to configure your multi-node k3s cluster.

Step-1. Firstly, we need to set up the server as we did for the single node cluster, using the same command -

curl -sfL https://get.k3s.io | sh -s - --write-kubeconfig-mode 644

Step-2. After that, use the command below to find the node-token, which the k3s-agent will use to add as many worker nodes as you want to your cluster.

sudo cat /var/lib/rancher/k3s/server/node-token

Step-3. The next command creates a k3s-agent for the master node we set up above, using the server IP and the node-token extracted from the master node. Please replace my-server and node-token with the master node's IP and the token obtained from the above command. Execute the following command on a separate instance/system to make it a worker node for the master created.

curl -sfL https://get.k3s.io | K3S_URL=https://my-server:6443 K3S_TOKEN=node-token sh -
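To avoid hand-editing the placeholders on every worker, the join command can be generated from the two values. The following is a minimal sketch: agent_install_cmd is a hypothetical helper, and the address and token shown are dummy values, not real ones.

```shell
#!/bin/sh
# Hypothetical helper: print the agent install command for a given
# server address and node-token. It only prints; it does not run anything.
agent_install_cmd() {
    server="$1"
    token="$2"
    if [ -z "$server" ] || [ -z "$token" ]; then
        echo "usage: agent_install_cmd <server-address> <node-token>" >&2
        return 1
    fi
    printf 'curl -sfL https://get.k3s.io | K3S_URL=https://%s:6443 K3S_TOKEN=%s sh -\n' "$server" "$token"
}

# Example with placeholder values; substitute your server IP and the
# token read from /var/lib/rancher/k3s/server/node-token.
agent_install_cmd "192.0.2.10" "example-token"
```

Printing the command instead of running it lets you review it before pasting it on the worker. Once the agent is installed, running kubectl get nodes on the server should list the new node.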

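Bingo! We have successfully set up a multi-node k3s cluster and are ready to begin our deployments on it. Note that a single server with agents is not yet highly available (HA); for HA, k3s needs more than one server node sharing a datastore, such as the external database mentioned earlier.

Now that we understand the different ways of setting up the cluster, let's look at the advantages of using k3s and whether it is really used by the industry.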

Benefits of k3s

Quick installation

Fewer resources required

Easy to set up a cluster

Smaller in size

Very easy to add/remove nodes

These are some of the advantages of having k3s clusters. Now the question arises: is it really used in the industry?

Companies using k3s

Thanks to its easy configuration and flexibility (it can run on something as small as a Raspberry Pi or as large as an AWS a1.4xlarge 32GiB server), it has been widely adopted by organizations in production. Some of the companies using k3s are -

Civo - The cloud native service provider

Qubitro - IoT platform to design and develop IoT projects

LoyaltyHarbour - Provides support for eCommerce stores

TWave - Condition Monitoring Systems

Lunar Ops, Inc - DevOps as a Service company based in Bronx, NY

So, these are a few of the companies using k3s in production.

I hope the blog was worth the precious minutes you invested. Feel free to like, share and comment your thoughts about the blog.